Indeed

At a Glance:

As a UX Research Intern, I led a project to improve the information architecture of Indeed’s hiring platform

Using a heuristic analysis, survey, and card sorting activity, I determined the optimal structure for 48 user settings in one central hub

My recommended architecture led to a design that ultimately became the current structure of Indeed’s hiring platform settings

Project Details:

Role: UX Researcher

Time Frame: 3 months

Skills:

Heuristic Evaluation

Competitive Analysis

Survey Design

Card Sorting

Tools:

Optimal Workshop / OptimalSort

Qualtrics

Miro

UserTesting.com

Challenge

Indeed is the #1 job site in the world, serving over 300M jobseekers and 3M employers globally. The platform has multiple employer products that have historically operated separately. There is no consistent experience for employers to manage individual, team, or company settings. Feedback from sales teams and the general consensus among product owners indicate that managing settings is a challenging, fractured experience for employers.

Research Goal

Determine the optimal structure for a global settings framework to deliver a unified experience across products at Indeed.

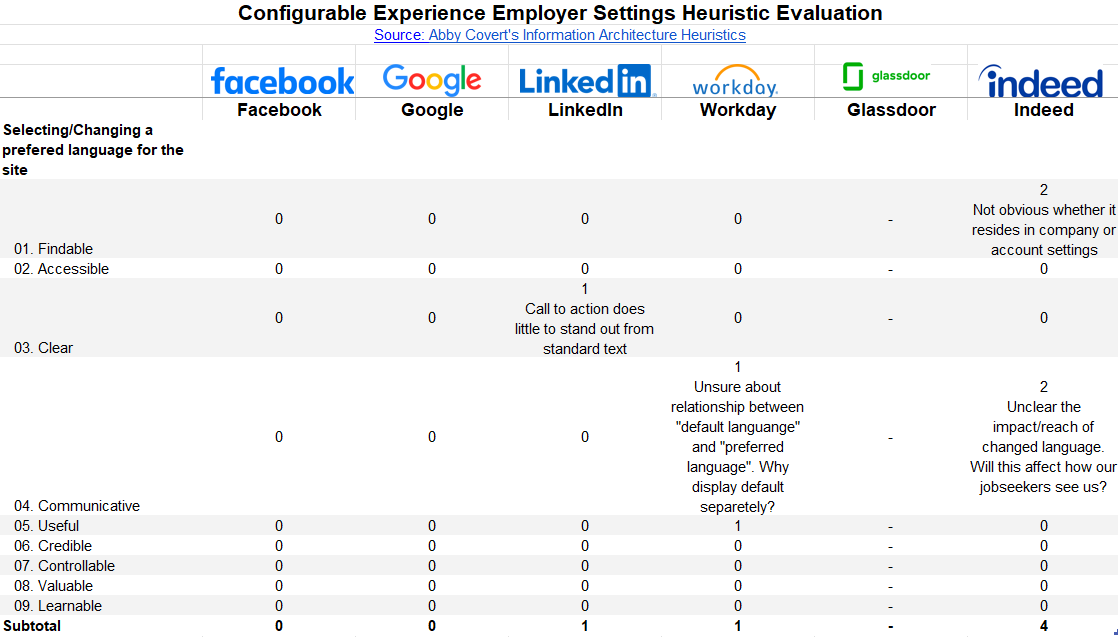

Heuristic Evaluation / Comparative Analysis

Indeed is not the only organization with multiple products housed within one platform, so I began this research by assessing the landscape of settings across the industry. Using a previously conducted audit of Indeed’s settings as a starting point, I conducted a heuristic evaluation of our current settings structure and compared its usability against other organizations. The analysis featured five competitors, including top tech companies (e.g. Google) and industry peers (e.g. Workday), each of which housed settings for multiple purposes in one central hub.

The ease with which six standard settings tasks could be completed was measured using Abby Covert’s Information Architecture Heuristic Framework. Competitors were graded against Indeed on a 5-point scale and qualitative notes were captured to provide additional context.

Heuristic Severity Rating: 0 = no usability issues; 4 = severe usability issues requiring immediate resolution.

Analysis:

I aggregated scores across the six tasks for each competitor, ranked them from most usable to least, and compared Indeed’s score against them.
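The aggregation described above can be sketched roughly as follows. This is a minimal illustration, not the actual analysis: the platform names and ratings are hypothetical placeholders, and summing the per-task severity ratings is an assumed way of combining them.

```python
# Hypothetical severity ratings (0 = no usability issues, 4 = severe),
# one value per task. Names and numbers are placeholders, not study data.
ratings = {
    "Platform A": [0, 1, 1, 0, 2, 1],
    "Platform B": [1, 2, 1, 1, 2, 2],
    "Platform C": [3, 3, 2, 4, 3, 3],
}


def rank_by_total_severity(ratings):
    """Return (name, total) pairs sorted from most usable (lowest total) to least."""
    totals = {name: sum(scores) for name, scores in ratings.items()}
    return sorted(totals.items(), key=lambda item: item[1])


ranking = rank_by_total_severity(ratings)
for position, (name, total) in enumerate(ranking, start=1):
    print(f"{position}. {name}: total severity {total}")
```

Because a lower severity rating means fewer usability issues, the platform with the lowest total ranks as most usable.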

Findings:

Indeed had the least favorable score across each of the six measured tasks.

The unclear distinction between company and account settings on Indeed negatively affected the findability of settings

Indeed had multiple settings repeated in different locations, and the relationship between them was often unclear

Many competitors included descriptions communicating the impact of modifying a setting (e.g. changing setting X will also affect Y)

These findings validated the need for a unified settings experience and provided a blueprint for what a cohesive settings structure could look like.

Survey

While the heuristic evaluation provided a look at how Indeed’s settings fared from a best practice perspective, input from Indeed’s Client Success (CS) team could back up those claims with quantitative data. CS representatives interact directly with employers daily and 7% of their calls deal with account maintenance issues. To identify the specific recurring pain points CS reps deal with most often, I designed and distributed a 10-question Qualtrics survey.

The survey sought to better understand:

The settings topics CS reps came across most often

An estimated frequency of such issues

The primary cause of such issues

Analysis:

The survey received 67 responses during its one week of availability. After calculating standard statistical values with the quantitative data, I used a thematic analysis technique to group open-ended responses into bins representing the setting/group of settings being referred to.

Findings:

CS reps typically dealt with settings-related issues multiple times a week

Company Profile and Subscriptions were the most common challenges mentioned by respondents.

Navigation was cited as the most common cause of issues

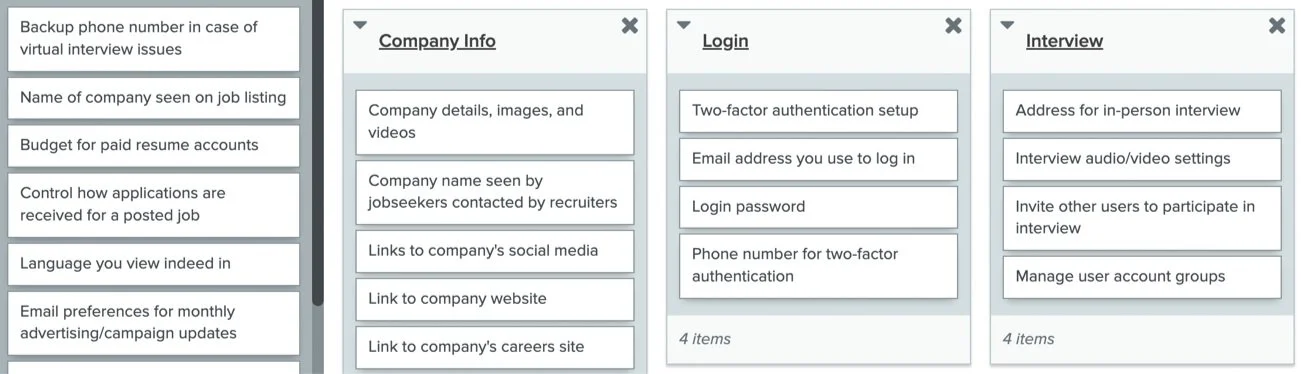

Card Sorting

With the problem identified and validated with data, I started to work on research that could directly inform solutions.

Using the 48 settings from the aforementioned audit, I conducted a series of card sorts through OptimalSort. I hosted eight moderated, open card sorting sessions, two with Client Success representatives and six with enterprise employers. Enterprise employers are the most frequent users of Indeed products, making them ideal candidates for this activity. I asked participants to organize the 48 settings into groups that made sense to them, labelling the groups and describing their thought process as they progressed.

Recruitment:

I identified participants by querying our database of enterprise users and manually selecting potential participants to create a pool diverse in company size. Challenges with no-shows and a quickly approaching deadline led me to develop an unmoderated version of the card sort suitable for UserTesting.com. Potential participants had to pass a screener to be eligible for the study. This method resulted in an additional 15 completed sorts.

Analysis:

I employed standardization grids and similarity matrices pre-built in OptimalSort to help locate patterns in the data. I identified natural breaking points in the percentages of sorts that grouped certain cards together, highlighting closely affiliated settings. For settings with less consistent groupings, I performed qualitative analysis of the user-generated group titles and comments made during moderated sessions, identifying the best fits.
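To illustrate what a similarity matrix captures, here is a minimal sketch that computes, for each pair of cards, the percentage of sorts that placed them in the same group. It is not OptimalSort's implementation; the card names, group labels, and sorts are hypothetical placeholders.

```python
from collections import Counter
from itertools import combinations

# Each sort maps a participant's group label to the cards placed in it.
# All names and data below are hypothetical placeholders.
sorts = [
    {"My Account": ["Password", "Email"],
     "Billing": ["Invoices", "Payment method"]},
    {"Profile": ["Password", "Email", "Payment method"],
     "Money": ["Invoices"]},
    {"Login": ["Password"],
     "Contact": ["Email"],
     "Payments": ["Invoices", "Payment method"]},
]


def similarity_matrix(sorts):
    """Return {(card_a, card_b): percent of sorts that grouped the pair together}."""
    pair_counts = Counter()
    for sort in sorts:
        for cards in sort.values():
            # Sort card names so each pair has one canonical key.
            for pair in combinations(sorted(cards), 2):
                pair_counts[pair] += 1
    return {pair: 100 * count / len(sorts) for pair, count in pair_counts.items()}


matrix = similarity_matrix(sorts)
for (card_a, card_b), pct in sorted(matrix.items(), key=lambda item: -item[1]):
    print(f"{card_a} + {card_b}: {pct:.0f}%")
```

Pairs with high percentages point to closely affiliated settings, while a sharp drop between adjacent percentages suggests a natural breaking point between groups.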

Findings:

Respondents often grouped settings according to how they experienced them on other sites like Google and Workday

Nearly every respondent included a group similar to “My Account”, and the cards included in this group had significant overlap

Group title variations signaled a need for content design assistance

Insights

As research concluded, overarching themes and insights helped guide next steps. From my individual research findings and my widened knowledge of the business over the course of my internship, the following facts became evident:

Indeed’s existing settings structure was costing Indeed money through the opportunity cost of CS reps resolving the same avoidable issues

The settings a user desired in a given instance often existed, just not always in the location they would expect, leading them either to select the incorrect setting or contact CS support

In addition to the development of a central settings hub, adding subheadings and a one-sentence description to each setting, describing its impact and relation to other settings, would resolve many of the smaller recurring issues.

Recommendation

As a final deliverable, I developed a recommended architecture for employer settings. This solution took into account not only the research results but also technical constraints raised in conversations with developers and product managers. The recommended architecture also introduced headings and subheadings that were missing from the original design.

Impact

My research led to designs that became the new architecture of employer settings for Indeed’s hiring platform shortly after my internship concluded. This project helped our team meet an important objective focused on building a cohesive experience across platforms. As a result of this research, we anticipate a reduction in Client Success cases dealing with account maintenance.

Next Steps

To extend the impact of this initial research, I recommend the following:

Tree testing – As a validation check to the proposed architecture, tree testing should be conducted with enterprise employers.

Further collaboration with Small/Medium-sized Business (SMB) researchers – Settings offerings for enterprise employers differ from those of small/medium-sized businesses. Slight modifications may be needed to fit the needs of this user base as well.

Usability testing of design – As a result of my research, a proposed design was created by my UX Designer teammate. Usability testing on this new design should be conducted.

Lessons Learned

Moderated card sorts are a cheat code – These sessions acted as a 2-in-1 card sort and interview. I learned a lot by probing on users’ thought processes after they had completed the sort.

Developer collaboration early in the process is critical – Understanding the technical constraints and reasoning behind certain architectural decisions reduces time wasted revising recommended solutions.

Plan for a 30-40% no-show rate – Although we offered incentives and sent multiple reminders, this project struggled to stay on track as a result of a high no-show rate during card sorting. This impact was mitigated by including unmoderated sessions, but developing a version suitable for unmoderated completion required additional time.